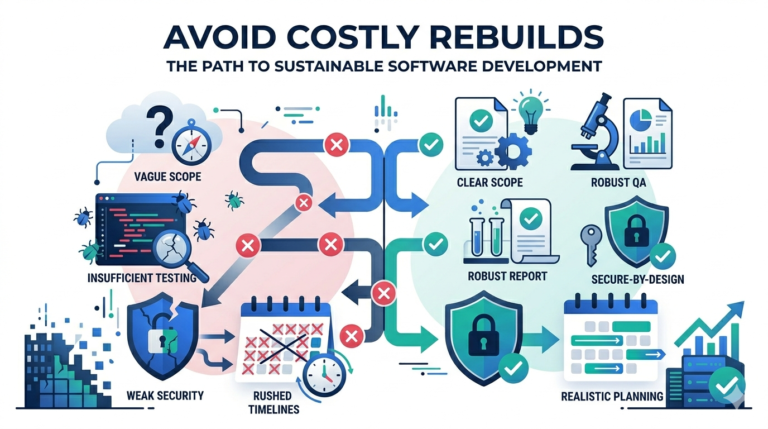

The real problem: most failures don’t start in code

A custom build rarely fails because developers “can’t code.” It fails because teams build the wrong thing, define success too loosely, or let complexity grow faster than clarity. A typical story goes like this: an MVP is approved quickly, deadlines are aggressive, and everyone agrees to “figure things out during development.” Two sprints later, stakeholders disagree on core workflows, integrations weren’t scoped properly, and the team is stuck rebuilding features that were already “done.” That’s when timelines slip, budgets expand, and trust drops.

The good news is that most of these issues are predictable. Once you learn the warning signs, you can prevent them with a few disciplined practices that don’t slow you down; they speed you up.

Mistake 1: Building before defining what “done” means

Symptoms: Work keeps coming back for revisions, stakeholders say “this isn’t what I meant,” and features feel 90% complete for weeks. QA finds “bugs” that are actually requirements misunderstandings.

Root cause: The team is missing acceptance criteria. Requirements exist as vague goals (“build reporting,” “add dashboard,” “improve onboarding”) instead of testable outcomes. Developers and stakeholders each interpret the same feature differently.

Fix: Convert every major feature into a workflow and define acceptance criteria in plain language. For example: who can access it, what happens on success, what happens on failure, and what data is shown. When “done” becomes testable, approvals become faster and rework drops.
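
To make that concrete, here is a minimal sketch of acceptance criteria written as checks anyone can run. Everything in it is an illustrative assumption: the `export_report` feature, the role names, and the messages are hypothetical stand-ins, not part of any real product.

```python
from dataclasses import dataclass


@dataclass
class User:
    name: str
    role: str


@dataclass
class ExportResult:
    ok: bool
    message: str
    rows: list


# Hypothetical feature under review: "export the monthly report".
def export_report(user: User, rows: list) -> ExportResult:
    if user.role not in {"admin", "analyst"}:
        return ExportResult(False, "Access denied", [])
    if not rows:
        return ExportResult(False, "No data for this period", [])
    return ExportResult(True, "Report exported", rows)


# Each acceptance criterion becomes one named check that is either True or False.
def check_acceptance_criteria() -> dict:
    admin = User("Ana", "admin")
    viewer = User("Vic", "viewer")
    data = [{"month": "2024-01", "revenue": 1200}]
    return {
        "who can access: admins can export": export_report(admin, data).ok,
        "who can access: viewers are refused": not export_report(viewer, data).ok,
        "on success: the exported rows match the source data": export_report(admin, data).rows == data,
        "on failure: an empty period gives a clear message": export_report(admin, []).message == "No data for this period",
    }
```

The value is less in the code than in the list of names: a stakeholder can read the four criteria, disagree with one before the sprint starts, and approve the rest without a meeting.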

Prevention: Before sprint work begins, confirm that every high-impact ticket has clear acceptance criteria and a single owner who can approve it.

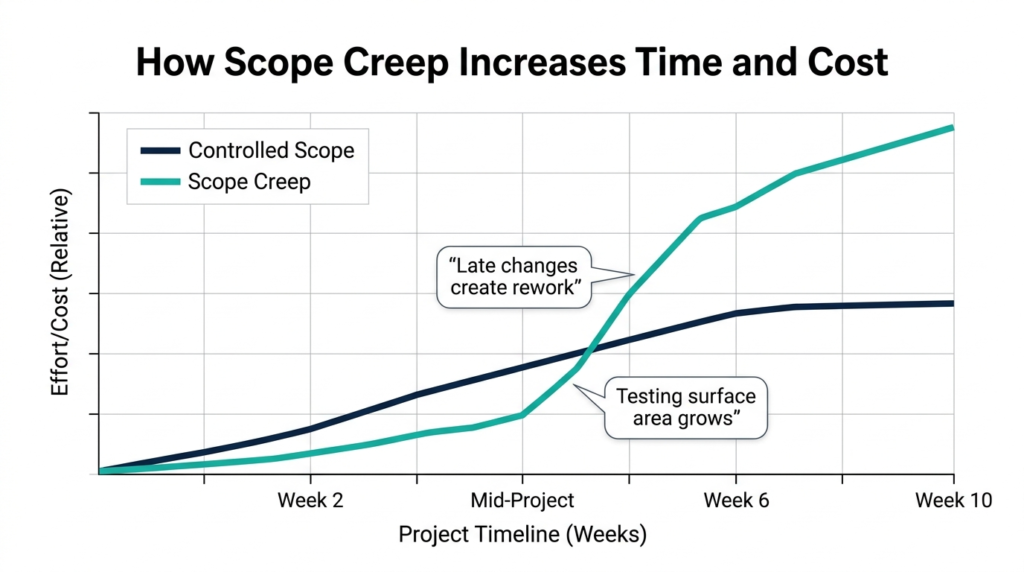

Mistake 2: Treating MVP as “the full product, just smaller”

Symptoms: The MVP takes months longer than expected, the backlog grows faster than it closes, and the product launches late without clear proof it solves the core problem.

Root cause: Teams lose sight of “minimal” and scope a feature-rich product instead. They build multiple user roles, advanced dashboards, deep automation, and many integrations before validating the core workflow. Every extra feature multiplies testing, edge cases, and review cycles.

Fix: Redefine MVP as “the smallest release that proves value.” Identify one core workflow that your product sells and build only what supports it. Everything else becomes Release 2.

Prevention: Create a hard MVP boundary. If something new enters scope, something else must move out. That rule alone protects timelines.

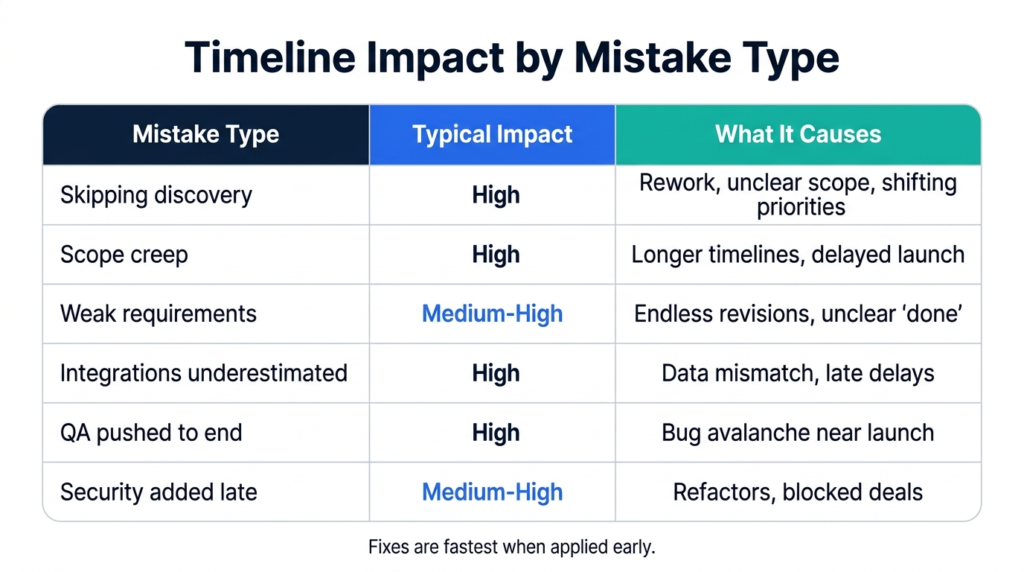

Mistake 3: Skipping discovery to “save time”

Symptoms: Early development looks fast, then stalls. Integrations appear late. Stakeholders change priorities mid-build. The team keeps rewriting the data model and workflows.

Root cause: Discovery is treated as optional, but discovery is where clarity is created. Without it, teams build on assumptions. Assumptions break once real users, real data, and real workflows show up.

Fix: Run a short discovery phase focused on outcomes, workflows, and risks. Define what must be built now, what can wait, and what’s unknown. Document integrations and data sources early.

Prevention: Don’t start production code until you have (1) a workflow map, (2) an MVP boundary, (3) acceptance criteria, and (4) a risk list. This is the foundation of sustainable custom software development.

Mistake 4: Underestimating integrations and data migration

Symptoms: “Connecting the CRM” takes weeks. Data doesn’t match. Reports are wrong. Users complain because records are missing or duplicated.

Root cause: Integrations are often scoped as simple API connections, but real systems contain messy data and inconsistent identifiers. Migration from spreadsheets or legacy tools reveals duplicates, missing values, and conflicting formats. These issues usually appear late if they aren’t tested early.

Fix: Treat integrations as first-class scope. Define what data moves, in which direction, how often, and which system is the source of truth. Run migration tests early with real sample data and create an integration layer with logging so failures are visible.
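
An early migration test can be very small. The sketch below profiles a sample of records for duplicate keys and missing required fields, and logs the result so problems are visible. The field names (`email`, `name`), the normalization rule, and the sample rows are assumptions made for illustration; swap in whatever identifiers your source systems actually use.

```python
import logging
from collections import Counter

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("migration")


def normalize_email(value):
    """Trim and lowercase an email; return None for empty or non-string values."""
    if isinstance(value, str) and value.strip():
        return value.strip().lower()
    return None


def profile_records(records, key="email", required=("email", "name")):
    """Report duplicate keys and rows with missing required fields."""
    keys = [normalize_email(r.get(key)) for r in records]
    counts = Counter(k for k in keys if k is not None)
    duplicates = sorted(k for k, n in counts.items() if n > 1)
    # A row is flagged when any required field is absent, None, or empty.
    missing = [i for i, r in enumerate(records) if any(not r.get(f) for f in required)]
    report = {
        "total": len(records),
        "duplicate_keys": duplicates,
        "rows_missing_required": missing,
    }
    log.info("migration profile: %s", report)
    return report


# Hypothetical sample exported from a legacy spreadsheet.
sample = [
    {"email": "ana@example.com", "name": "Ana"},
    {"email": " ANA@example.com ", "name": "Ana M."},  # same person, different formatting
    {"email": "", "name": "No Email"},
    {"email": "bo@example.com", "name": None},
]
report = profile_records(sample)
```

Running a check like this against a real sample during discovery tends to surface the duplicate and missing-value problems weeks before they would otherwise show up as wrong reports.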

Prevention: Make “integration readiness” a milestone, not a footnote. If your product depends on external systems, validate them in discovery, not after the UI is built.

Mistake 5: Choosing technology based on trends, not fit

Symptoms: Delivery slows because the team is learning the stack while building. Hiring becomes difficult. The system becomes expensive to operate. Architecture feels brittle.

Root cause: Teams pick a stack because it’s popular, because a developer prefers it, or because competitors use it. But the best stack is the one your team can maintain and scale while meeting security, integration, and performance requirements.

Fix: Choose tech after discovery. Evaluate constraints: expected load, integrations, compliance needs, team skills, and long-term maintainability. Prefer stable, well-supported frameworks unless a newer tool provides a clear, documented benefit.

Prevention: Ask one question before finalizing: “Can we hire and maintain this stack for the next 2–3 years without pain?” If the answer is uncertain, simplify.

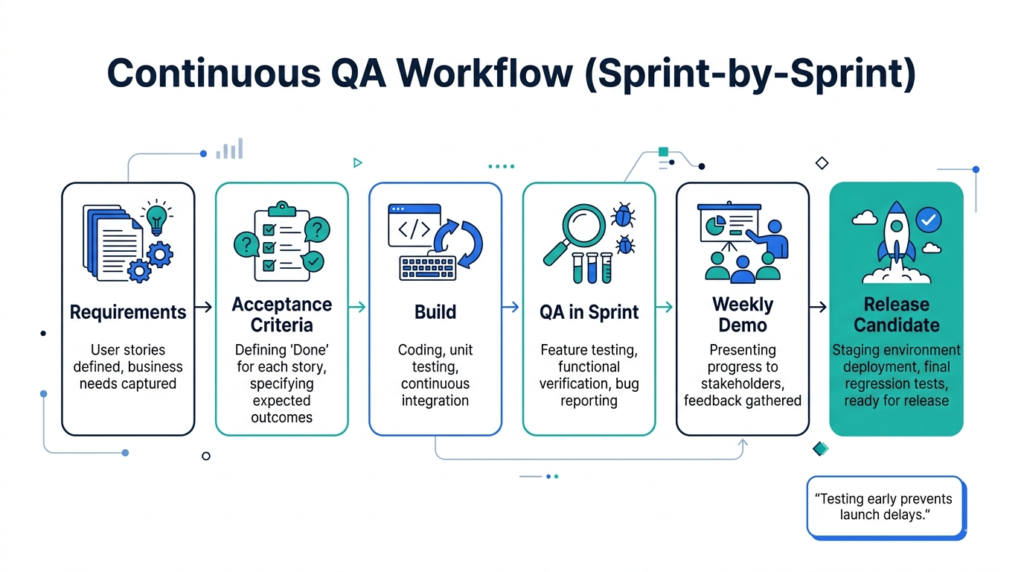

Mistake 6: Pushing QA to the end

Symptoms: Launch is delayed by a flood of bugs. Teams do late-night fixes. Releases become risky. Users face broken flows in production.

Root cause: Testing is treated as a final stage instead of a continuous habit. Late QA means issues stack up and debugging becomes harder because many changes land at once. Performance problems also appear late, because nobody tested real-world usage early.

Fix: Bake QA into every sprint. Test core workflows as they are built and automate tests for critical paths (auth, payments, data updates). Keep a staging environment that mirrors production settings and run regression checks before release candidates.
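
As a rough illustration of automating a critical path, the sketch below uses Python’s standard `unittest` module to pin down the behavior of a payment step. The `apply_payment` function and its rules are hypothetical; the point is that the suite is cheap to run on every change.

```python
import unittest


# Hypothetical critical-path function: apply a payment to an account balance (in cents).
def apply_payment(balance_cents: int, amount_cents: int) -> int:
    if amount_cents <= 0:
        raise ValueError("amount must be positive")
    if amount_cents > balance_cents:
        raise ValueError("insufficient funds")
    return balance_cents - amount_cents


class PaymentRegressionTests(unittest.TestCase):
    def test_successful_payment_reduces_balance(self):
        self.assertEqual(apply_payment(10_000, 2_500), 7_500)

    def test_overdraft_is_rejected(self):
        with self.assertRaises(ValueError):
            apply_payment(1_000, 2_500)

    def test_non_positive_amount_is_rejected(self):
        with self.assertRaises(ValueError):
            apply_payment(1_000, 0)


def run_suite() -> unittest.TestResult:
    """Run the regression suite and return the result object for a release gate to inspect."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(PaymentRegressionTests)
    return unittest.TextTestRunner(verbosity=0).run(suite)
```

A release candidate script could call `run_suite()` and refuse to proceed unless `wasSuccessful()` returns true.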

Prevention: Make “test passing” part of the definition of done. If a feature isn’t testable, it isn’t ready.

Mistake 7: Security added late (the most expensive kind of rework)

Symptoms: Enterprise buyers ask for audit logs, RBAC, and SSO readiness. You scramble. Permissions are inconsistent. Sensitive data is exposed through APIs. Security reviews block deals.

Root cause: Security decisions affect architecture from day one. If you bolt security on later, you often need to refactor workflows, APIs, and data access patterns. This is especially painful for B2B products.

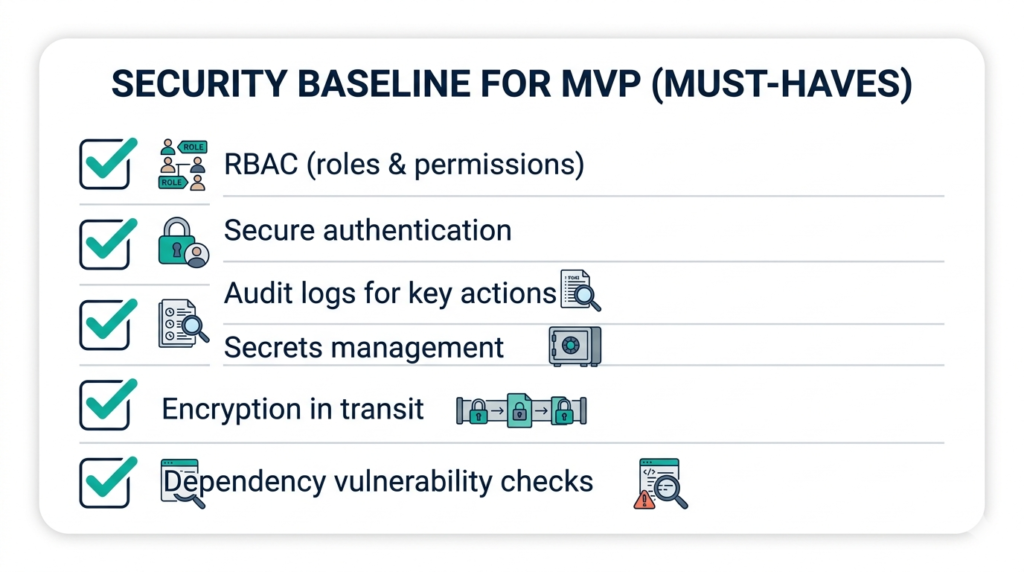

Fix: Define a baseline security model early: role-based access control, secure authentication, secrets management, and audit logs for critical actions. Implement it alongside core features, not after them.
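
A baseline does not have to be elaborate. The following sketch shows one possible shape for role-based access control with an audit trail. The roles, permission strings, and in-memory `AUDIT_LOG` are assumptions for illustration only; a real system would load roles from its own model and write audit entries to durable, append-only storage.

```python
from datetime import datetime, timezone

# Hypothetical role-to-permission map.
ROLE_PERMISSIONS = {
    "admin": {"invoice:read", "invoice:write", "user:manage"},
    "accountant": {"invoice:read", "invoice:write"},
    "viewer": {"invoice:read"},
}

# In-memory stand-in for an append-only audit store.
AUDIT_LOG = []


def is_allowed(role: str, permission: str) -> bool:
    """Unknown roles get no permissions (deny by default)."""
    return permission in ROLE_PERMISSIONS.get(role, set())


def perform(user: str, role: str, permission: str, action):
    """Check access, record the attempt, then run the action only if allowed."""
    allowed = is_allowed(role, permission)
    AUDIT_LOG.append({
        "at": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "role": role,
        "permission": permission,
        "allowed": allowed,
    })
    if not allowed:
        raise PermissionError(f"role '{role}' lacks permission '{permission}'")
    return action()
```

Routing every critical action through a single function like `perform` means that denied attempts are logged as well as successful ones, which is usually what an enterprise security review asks to see.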

Prevention: Treat security as product quality. A simple baseline early is cheaper than a “security retrofit” later.

Mistake 8: Adding AI without data readiness

Symptoms: AI features don’t perform reliably. Outputs are inconsistent. Teams spend weeks tweaking prompts or models with little progress. Stakeholders lose confidence.

Root cause: AI success depends on data quality, evaluation metrics, and operational controls. Teams often start AI work because it sounds innovative, but they haven’t defined what “good output” means or whether the required data exists and is clean.

Fix: Start AI work with a data and evaluation plan. Define success metrics, establish ground truth where possible, and design safe human-in-the-loop workflows for early releases. Plan monitoring from day one.
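
One way to pin down what “good output” means is a small evaluation harness that scores the model against labeled examples and routes misses to a person. In the sketch below, the keyword classifier is a deliberately naive stand-in for a real model, and the labels, examples, and 0.9 threshold are all illustrative assumptions.

```python
def evaluate(predict, labeled_examples, threshold=0.9):
    """Score a predict function against ground truth and collect failures for human review."""
    if not labeled_examples:
        raise ValueError("need at least one labeled example")
    results = [(text, expected, predict(text)) for text, expected in labeled_examples]
    correct = sum(1 for _, expected, got in results if got == expected)
    accuracy = correct / len(results)
    return {
        "accuracy": accuracy,
        "passed": accuracy >= threshold,
        # Each entry is (input text, expected label, predicted label).
        "needs_review": [(t, e, g) for t, e, g in results if g != e],
    }


# Stand-in "model": flags a message as a refund request if it contains the word "refund".
def keyword_classifier(text: str) -> str:
    return "refund" if "refund" in text.lower() else "other"


# Hypothetical ground truth, labeled by a person.
GROUND_TRUTH = [
    ("I want a refund for my order", "refund"),
    ("Please REFUND me", "refund"),
    ("Where is my package?", "other"),
    ("Money back please", "refund"),
]

report = evaluate(keyword_classifier, GROUND_TRUTH, threshold=0.9)
```

Here the stand-in model gets three of four examples right, so it fails the 0.9 threshold and the “Money back please” case lands in `needs_review`. That is the kind of evidence that turns prompt tweaking into a measurable loop.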

Prevention: If AI is on your roadmap, approach AI software development as a product capability, not a demo feature. Data readiness and evaluation are the real timeline drivers.

Mistake 9: No clear ownership or slow decision cycles

Symptoms: The team waits for approvals. Feedback arrives late and conflicts. Priorities change weekly. Sprint goals are missed even when development is strong.

Root cause: Projects fail when there isn’t one decision-maker who owns scope and trade-offs. Without a clear product owner, every stakeholder becomes a mini owner, and the backlog becomes political.

Fix: Assign one accountable product owner. Set a weekly demo cadence and a fixed review window for feedback. Keep requirements and decisions in a single source of truth.

Prevention: If you can’t decide quickly, you can’t ship quickly. Speed is often a decision-making problem, not a development problem.

Two small “do this first” checks

- Before development starts: confirm MVP boundary + acceptance criteria + integration list + risk list.

- Before launch: confirm regression testing + monitoring + rollback plan + security baseline.
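
If you want these two checks to be harder to skip, they can be encoded as a tiny gate. This is only a sketch: the item names mirror the two lists above, and the `missing_items` helper and the example `confirmed` values are hypothetical.

```python
PRE_DEVELOPMENT = ("mvp_boundary", "acceptance_criteria", "integration_list", "risk_list")
PRE_LAUNCH = ("regression_testing", "monitoring", "rollback_plan", "security_baseline")


def missing_items(required, confirmed):
    """Return the checklist items that have not been explicitly confirmed."""
    return [item for item in required if not confirmed.get(item, False)]


# Hypothetical status reported by the product owner before launch.
confirmed = {"regression_testing": True, "monitoring": True, "security_baseline": False}
blockers = missing_items(PRE_LAUNCH, confirmed)
```

With the example status above, `blockers` lists the rollback plan (never confirmed) and the security baseline (explicitly not ready), in checklist order.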

FAQs

Isn’t this too much process for an MVP?

No. A short discovery, clear acceptance criteria, and sprint demos are not bureaucracy. They reduce rework and help you ship faster.

Which mistake is the most costly?

Skipping discovery and leaving “done” undefined cause the most rework. Late security and underestimated integrations are the next biggest budget-drainers.

How do we reduce delays without adding people?

Tighten scope, speed up approvals, define acceptance criteria, and test continuously. These changes reduce rework, which is where time disappears.

Most custom software problems aren’t technical mysteries. They’re predictable failure patterns: unclear scope, late testing, poor integration planning, slow decisions, and security added too late. Fixing them doesn’t require more meetings—it requires better clarity at the right moments. If you protect your MVP boundary, define “done” clearly, and keep weekly demos and QA discipline, you’ll ship faster and with far fewer surprises.